Conductance (graph theory)

| Part of a series on | ||||

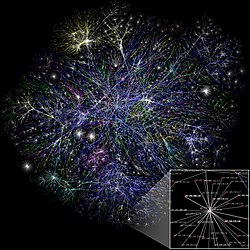

| Network science | ||||

|---|---|---|---|---|

| Network types | ||||

| Graphs | ||||

|

||||

| Models | ||||

|

||||

| ||||

In theoretical computer science, graph theory, and mathematics, the conductance is a parameter of a Markov chain that is closely tied to its mixing time, that is, how rapidly the chain converges to its stationary distribution, should it exist. Equivalently, the conductance can be viewed as a parameter of a directed graph, in which case it can be used to analyze how quickly random walks in the graph converge.

The conductance of a graph is closely related to the Cheeger constant of the graph, which is also known as the edge expansion or the isoperimetric number. However, because the two quantities normalize a cut differently (by the volume of a vertex set versus by its number of vertices), the conductance and the edge expansion do not generally coincide if the graph is not regular. By contrast, the notion of electrical conductance that appears in electrical networks is unrelated to the conductance of a graph.

History

The conductance was first defined by Mark Jerrum and Alistair Sinclair in 1988 to prove that the permanent of a matrix with entries from {0,1} has a polynomial-time approximation scheme.[1] In the proof, Jerrum and Sinclair studied the Markov chain that switches between perfect and near-perfect matchings in bipartite graphs by adding or removing individual edges. They defined and used the conductance to prove that this Markov chain is rapidly mixing. This means that, after running the Markov chain for a polynomial number of steps, the resulting distribution is guaranteed to be close to the stationary distribution, which in this case is the uniform distribution on the set of all perfect and near-perfect matchings. This rapidly mixing Markov chain makes it possible in polynomial time to draw approximately uniform random samples from the set of all perfect matchings in the bipartite graph, which in turn gives rise to the polynomial-time approximation scheme for computing the permanent.

Definition

For undirected $d$-regular graphs without edge weights, the conductance is equal to the Cheeger constant divided by $d$, that is, we have $\varphi(G) = h(G)/d$.
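A small worked example makes this relation concrete. It assumes the usual edge-expansion form of the Cheeger constant, $h(G) = \min_{0 < |S| \le n/2} |\partial S|/|S|$, where $\partial S$ is the set of edges leaving $S$, and uses the fact that in a $d$-regular graph a set $S$ has total degree $d|S|$, so $\varphi(S) = |\partial S| / (d \min(|S|, |\bar S|))$ and the same set minimizes both quantities. For the cycle $C_n$ with $n$ even (so $d = 2$), every nonempty proper vertex subset has at least two boundary edges, and a path of $n/2$ consecutive vertices has exactly two, so:

```latex
h(C_n) = \frac{2}{n/2} = \frac{4}{n},
\qquad
\varphi(C_n) = \frac{2}{2 \cdot (n/2)} = \frac{2}{n} = \frac{h(C_n)}{2}.
```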

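More generally, let $G$ be a directed graph with $n$ vertices, vertex set $V$, edge set $E$, and real weights $a_{ij} \ge 0$ on each edge $ij \in E$. Let $S \subseteq V$ be any vertex subset and write $\bar S = V \setminus S$. The conductance $\varphi(S)$ of the cut $(S, \bar S)$ is defined via

$$\varphi(S) = \frac{a(S, \bar S)}{\min\bigl(\mathrm{vol}(S), \mathrm{vol}(\bar S)\bigr)},$$

where

$$a(S, T) = \sum_{i \in S} \sum_{j \in T} a_{ij},$$

so that $a(S, \bar S)$ is the total weight of all edges crossing the cut from $S$ to $\bar S$, and

$$\mathrm{vol}(S) = a(S, V) = \sum_{i \in S} \sum_{j \in V} a_{ij}$$

is the volume of $S$, that is, the total weight of all edges that start at $S$. If $\mathrm{vol}(S)$ equals $0$, then $a(S, \bar S)$ also equals $0$, and $\varphi(S)$ is defined as $1$.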
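These definitions translate directly into code. The following is a minimal Python sketch, assuming the weights are stored as a dense $n \times n$ matrix `a` with `a[i][j]` equal to $a_{ij}$ and $0$ for non-edges; the function names `cut_weight`, `volume`, and `cut_conductance` are illustrative, not taken from any library.

```python
from typing import Iterable, List, Set

Matrix = List[List[float]]


def cut_weight(a: Matrix, S: Iterable[int], T: Iterable[int]) -> float:
    """a(S, T): total weight of edges i -> j with i in S and j in T."""
    T = list(T)  # materialize so T can be scanned once per i
    return sum(a[i][j] for i in S for j in T)


def volume(a: Matrix, S: Iterable[int]) -> float:
    """vol(S) = a(S, V): total weight of all edges that start in S."""
    return cut_weight(a, S, range(len(a)))


def cut_conductance(a: Matrix, S: Set[int]) -> float:
    """phi(S) = a(S, S_bar) / min(vol(S), vol(S_bar)).

    Returns 1 when the denominator is 0; the text states this convention for
    vol(S) = 0, and applying it to vol(S_bar) = 0 as well is an assumption.
    """
    S = set(S)
    S_bar = set(range(len(a))) - S
    denom = min(volume(a, S), volume(a, S_bar))
    if denom == 0:
        return 1.0
    return cut_weight(a, S, S_bar) / denom


# Path 0 - 1 - 2 - 3 with unit weights, stored symmetrically (undirected).
path = [
    [0, 1, 0, 0],
    [1, 0, 1, 0],
    [0, 1, 0, 1],
    [0, 0, 1, 0],
]
print(cut_conductance(path, {0, 1}))  # one crossing edge, min(3, 3) = 3, so 1/3
```

Storing an undirected graph with $a_{ij} = a_{ji}$ means every edge is counted once in each direction, which is what makes $\mathrm{vol}(S)$ equal the sum of the degrees in $S$.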

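The conductance of the graph $G$ is now defined as the minimum conductance over all possible cuts:

$$\varphi(G) = \min_{S \subseteq V} \varphi(S).$$

When the weights are symmetric ($a_{ij} = a_{ji}$, as in an undirected graph), the conductance equivalently satisfies

$$\varphi(G) = \min \left\{ \frac{a(S, \bar S)}{\mathrm{vol}(S)} \;:\; S \subseteq V,\; 0 < \mathrm{vol}(S) \le \frac{\mathrm{vol}(V)}{2} \right\}.$$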
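For small graphs, $\varphi(G)$ can be computed by brute force over all $2^n - 2$ nonempty proper subsets. The sketch below assumes a dense symmetric weight matrix and uses hypothetical helper names; it evaluates both the min-over-cuts definition and the half-volume form, so the two can be compared on undirected examples. Its running time is exponential in $n$, so it is only meant for checking small cases.

```python
from itertools import combinations
from typing import List, Sequence

Matrix = List[List[float]]


def _cut(a: Matrix, S: Sequence[int], T: Sequence[int]) -> float:
    """a(S, T) = sum of a[i][j] over i in S, j in T."""
    return sum(a[i][j] for i in S for j in T)


def graph_conductance(a: Matrix) -> float:
    """phi(G) = min over cuts of a(S, S_bar) / min(vol(S), vol(S_bar))."""
    V = range(len(a))
    best = 1.0  # phi(S) = 1 whenever the denominator is 0, so start at 1
    for k in range(1, len(a)):
        for S in combinations(V, k):
            S_bar = [v for v in V if v not in S]
            denom = min(_cut(a, S, V), _cut(a, S_bar, V))
            if denom > 0:
                best = min(best, _cut(a, S, S_bar) / denom)
    return best


def graph_conductance_half(a: Matrix) -> float:
    """Symmetric-weight form: min of a(S, S_bar) / vol(S) over 0 < vol(S) <= vol(V)/2."""
    V = range(len(a))
    total = _cut(a, V, V)
    best = 1.0  # a(S, S_bar) <= vol(S), so every candidate is at most 1
    for k in range(1, len(a)):
        for S in combinations(V, k):
            vol_S = _cut(a, S, V)
            if 0 < vol_S <= total / 2:
                S_bar = [v for v in V if v not in S]
                best = min(best, _cut(a, S, S_bar) / vol_S)
    return best


def from_edges(n: int, edges) -> Matrix:
    """Unit-weight symmetric adjacency matrix of an undirected graph."""
    a = [[0] * n for _ in range(n)]
    for u, v in edges:
        a[u][v] = a[v][u] = 1
    return a


cycle6 = from_edges(6, [(i, (i + 1) % 6) for i in range(6)])
# Two triangles {0, 1, 2} and {3, 4, 5} joined by the single edge 2-3.
barbell = from_edges(6, [(0, 1), (0, 2), (1, 2), (3, 4), (3, 5), (4, 5), (2, 3)])
print(graph_conductance(cycle6), graph_conductance_half(cycle6))    # both 1/3
print(graph_conductance(barbell), graph_conductance_half(barbell))  # both 1/7
```

On the two joined triangles the bridge is the only one-edge cut and each side has volume $7$, which is why the conductance is $1/7$.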

Generalizations and applications

In practical applications, one often considers only the conductance $\varphi(S)$ of a particular cut rather than the minimum over all cuts. A common generalization of conductance handles weights assigned to the edges: the weights of the crossing edges are added; if each weight is a resistance, then the reciprocals of the weights are added instead.
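A minimal sketch of the two summation rules, assuming edges are given as undirected `(u, v, w)` triples; the function `crossing_weight` and its `resistances` flag are hypothetical names chosen for illustration.

```python
from typing import Iterable, Set, Tuple

Edge = Tuple[int, int, float]


def crossing_weight(edges: Iterable[Edge], S: Set[int], resistances: bool = False) -> float:
    """Sum the weights of undirected edges with exactly one endpoint in S.

    If `resistances` is True, each weight is read as a resistance r and
    its reciprocal 1 / r is summed instead.
    """
    total = 0.0
    for u, v, w in edges:
        if (u in S) != (v in S):  # the edge crosses the cut
            total += 1.0 / w if resistances else w
    return total


edges = [(0, 1, 2.0), (0, 2, 4.0), (1, 2, 1.0)]
print(crossing_weight(edges, {0}))                    # 2.0 + 4.0 = 6.0
print(crossing_weight(edges, {0}, resistances=True))  # 1/2 + 1/4 = 0.75
```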

The notion of conductance underpins the study of percolation in physics and other applied areas; for example, the permeability of porous rock to petroleum can be modeled in terms of the conductance of a graph whose edge weights are given by pore sizes.

Conductance also helps measure the quality of a spectral clustering. The maximum conductance among the clusters provides a bound that can be used, together with the inter-cluster edge weight, to define a measure of clustering quality. Intuitively, the conductance of a cluster (viewed as a set of vertices in the graph) should be low. The conductance of the subgraph induced by a cluster, called its "internal conductance", can also be used.
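As a sketch of the cluster-level ingredient described above, the code below computes each cluster's conductance as a cut of the whole graph and reports the maximum. It assumes a dense symmetric weight matrix and uses hypothetical function names; it does not implement the full quality measure of Kannan, Vempala, and Vetta, only the maximum-conductance part.

```python
from typing import List, Sequence, Set

Matrix = List[List[float]]


def cut_conductance(a: Matrix, S: Set[int]) -> float:
    """phi(S) = a(S, S_bar) / min(vol(S), vol(S_bar)), or 1 if that minimum is 0."""
    V = range(len(a))
    S_bar = [v for v in V if v not in S]
    vol_S = sum(a[i][j] for i in S for j in V)
    vol_S_bar = sum(a[i][j] for i in S_bar for j in V)
    denom = min(vol_S, vol_S_bar)
    if denom == 0:
        return 1.0
    return sum(a[i][j] for i in S for j in S_bar) / denom


def max_cluster_conductance(a: Matrix, clusters: Sequence[Set[int]]) -> float:
    """Largest conductance of any cluster, each treated as a cut of the whole graph.
    Lower values indicate better-separated clusters."""
    return max(cut_conductance(a, C) for C in clusters)


# Two triangles {0, 1, 2} and {3, 4, 5} joined by the single edge 2-3.
n = 6
a = [[0] * n for _ in range(n)]
for u, v in [(0, 1), (0, 2), (1, 2), (3, 4), (3, 5), (4, 5), (2, 3)]:
    a[u][v] = a[v][u] = 1

good = [{0, 1, 2}, {3, 4, 5}]
bad = [{0, 1, 3}, {2, 4, 5}]
print(max_cluster_conductance(a, good))  # 1/7
print(max_cluster_conductance(a, bad))   # 5/7
```

The natural split crosses only the bridge, while the other split cuts five edges with the same volume on each side. The internal conductance would need a separate computation on each cluster's induced subgraph.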

Markov chains

For an ergodic reversible Markov chain with an underlying graph $G$, the conductance measures how hard it is to leave a small set of nodes. Formally, the conductance is defined as the minimum, over all sets $S$ with $0 < \pi(S) \le \frac{1}{2}$, of the ergodic flow out of $S$ divided by the capacity $\pi(S)$ of $S$. Alistair Sinclair showed that conductance is closely tied to mixing time in ergodic reversible Markov chains. Conductance can also be viewed probabilistically: $\Phi_S$ is the probability that one step of the chain leaves $S$, given that the chain starts in $S$ according to the stationary distribution restricted to $S$. This may be written as

$$\Phi = \min_{S \subseteq V,\; 0 < \pi(S) \le \frac{1}{2}} \Phi_S = \min_{S \subseteq V,\; 0 < \pi(S) \le \frac{1}{2}} \frac{\sum_{x \in S,\, y \in \bar S} \pi(x) P(x, y)}{\pi(S)},$$

where $\pi$ is the stationary distribution of the chain, $P$ is its transition matrix, and $V$ is its state space. In some literature, this quantity is also called the bottleneck ratio of $G$.
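The bottleneck ratio can be evaluated by brute force on a small chain. The sketch below assumes the transition matrix `P` and stationary distribution `pi` are given as plain Python lists; `bottleneck_ratio` is a hypothetical name. The example is the simple random walk on the 4-cycle, whose stationary distribution is uniform.

```python
from itertools import combinations
from typing import List, Sequence


def bottleneck_ratio(P: List[List[float]], pi: Sequence[float]) -> float:
    """Phi = min over S with 0 < pi(S) <= 1/2 of Q(S, S_bar) / pi(S),
    where Q(S, S_bar) = sum of pi(x) * P(x, y) over x in S, y not in S.
    Brute force over all subsets, so only for small state spaces."""
    n = len(P)
    best = float("inf")
    for k in range(1, n):
        for S in combinations(range(n), k):
            pi_S = sum(pi[x] for x in S)
            if 0 < pi_S <= 0.5 + 1e-12:  # small tolerance for rounding
                S_bar = [y for y in range(n) if y not in S]
                flow = sum(pi[x] * P[x][y] for x in S for y in S_bar)
                best = min(best, flow / pi_S)
    return best


# Simple random walk on the cycle 0 - 1 - 2 - 3 - 0.
n = 4
P = [[0.0] * n for _ in range(n)]
for x in range(n):
    P[x][(x + 1) % n] = 0.5
    P[x][(x - 1) % n] = 0.5
pi = [1 / n] * n  # uniform distribution is stationary for this walk
print(bottleneck_ratio(P, pi))  # 0.5
```

For the random walk on an undirected weighted graph, $\pi(x) = \mathrm{vol}(\{x\})/\mathrm{vol}(V)$ and $P(x, y) = a_{xy}/\mathrm{vol}(\{x\})$, so $\pi(x)P(x, y) = a_{xy}/\mathrm{vol}(V)$ and $\Phi_S = a(S, \bar S)/\mathrm{vol}(S)$. The bottleneck ratio therefore agrees with the graph conductance restricted to sets with $\mathrm{vol}(S) \le \mathrm{vol}(V)/2$; for the 4-cycle both equal $1/2$.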

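Conductance is related to Markov chain mixing time in the reversible setting. Let $\tau_x(\delta)$ denote the first time $t$ at which the distribution $P^t(x, \cdot)$ of the chain started at $x$ is within total variation distance $\delta$ of $\pi$. For any irreducible, reversible Markov chain with self-loop probabilities $P(y, y) \ge \frac{1}{2}$ for all states $y$:

- $\tau_x(\delta) \le \frac{2}{\Phi^2}\left(\ln \pi(x)^{-1} + \ln \delta^{-1}\right)$ for every initial state $x$, and
- $\max_x \tau_x(1/4) \ge \frac{1}{4\Phi}$, so a chain with small conductance cannot mix quickly from every starting state.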
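The two bounds can be checked numerically on a small example. The sketch below takes the lazy random walk on the cycle $C_8$ (which satisfies $P(y, y) = 1/2$), computes $\Phi$ by brute force, finds $\tau_0(1/4)$ by iterating the distribution until the total variation distance drops to $1/4$, and compares it with both bounds. Because the cycle looks the same from every vertex, starting at state 0 gives the worst-case mixing time that the lower bound refers to. All function names are hypothetical.

```python
import math
from itertools import combinations


def lazy_cycle(n):
    """Transition matrix of the lazy random walk on the cycle C_n (n >= 3):
    stay put with probability 1/2, otherwise step to a uniform neighbour."""
    P = [[0.0] * n for _ in range(n)]
    for x in range(n):
        P[x][x] = 0.5
        P[x][(x + 1) % n] = 0.25
        P[x][(x - 1) % n] = 0.25
    return P


def bottleneck_ratio(P, pi):
    """Brute-force Phi = min over 0 < pi(S) <= 1/2 of Q(S, S_bar) / pi(S)."""
    n = len(P)
    best = float("inf")
    for k in range(1, n):
        for S in combinations(range(n), k):
            pi_S = sum(pi[x] for x in S)
            if 0 < pi_S <= 0.5 + 1e-12:
                S_bar = [y for y in range(n) if y not in S]
                flow = sum(pi[x] * P[x][y] for x in S for y in S_bar)
                best = min(best, flow / pi_S)
    return best


def mixing_time(P, pi, x, delta, max_steps=100_000):
    """tau_x(delta): first t with ||P^t(x, .) - pi||_TV <= delta."""
    n = len(P)
    mu = [0.0] * n
    mu[x] = 1.0
    for t in range(max_steps + 1):
        if 0.5 * sum(abs(m - p) for m, p in zip(mu, pi)) <= delta:
            return t
        mu = [sum(mu[i] * P[i][j] for i in range(n)) for j in range(n)]
    raise RuntimeError("chain did not mix within max_steps")


def conductance_bounds(phi, pi_x, delta):
    """Lower bound 1/(4 Phi), stated for the worst start with delta = 1/4, and
    upper bound (2/Phi^2)(ln 1/pi(x) + ln 1/delta) for lazy reversible chains."""
    lower = 1 / (4 * phi)
    upper = (2 / phi**2) * (math.log(1 / pi_x) + math.log(1 / delta))
    return lower, upper


n, delta = 8, 0.25
P = lazy_cycle(n)
pi = [1 / n] * n
phi = bottleneck_ratio(P, pi)       # 1/n for the lazy walk on an even cycle
tau = mixing_time(P, pi, 0, delta)  # every start is equivalent on the cycle
lower, upper = conductance_bounds(phi, pi[0], delta)
print(phi, lower, tau, upper)
```

The upper bound evaluates to about 444 steps here, far above the actual mixing time, because it loses a factor of $\Phi^{-1}$ relative to the lower bound; bounds of this kind are mainly used to show that a chain mixes in polynomial time, as in the Jerrum and Sinclair application.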

Notes

- Jerrum & Sinclair 1988, pp. 235–244.

References

- Jerrum, Mark; Sinclair, Alistair (1988). Conductance and the rapid mixing property for Markov chains: the approximation of permanent resolved. ACM Press. doi:10.1145/62212.62234. ISBN 978-0-89791-264-8.

- Bollobás, Béla (1998). Modern Graph Theory. Graduate Texts in Mathematics. Vol. 184. Springer-Verlag. p. 321. ISBN 0-387-98488-7.

- Kannan, Ravi; Vempala, Santosh; Vetta, Adrian (2004). "On clusterings: Good, bad and spectral". Journal of the ACM. 51 (3): 497–515. doi:10.1145/990308.990313. ISSN 0004-5411.

- Chung, Fan R. K. (1997). Spectral Graph Theory. Providence, RI: American Mathematical Society. ISBN 0-8218-0315-8.

- Sinclair, Alistair (1993). Algorithms for Random Generation and Counting: A Markov Chain Approach. Boston, MA: Birkhäuser Boston. doi:10.1007/978-1-4612-0323-0. ISBN 978-1-4612-6707-2.

- Levin, David A.; Peres, Yuval (2017). Markov Chains and Mixing Times (2nd ed.). Providence, RI: American Mathematical Society. ISBN 978-1-4704-2962-1.

- Cheeger, Jeff (1971). "A Lower Bound for the Smallest Eigenvalue of the Laplacian". Problems in Analysis: A Symposium in Honor of Salomon Bochner (PMS-31). Princeton University Press. pp. 195–200. doi:10.1515/9781400869312-013. ISBN 978-1-4008-6931-2.

- Diaconis, Persi; Stroock, Daniel (1991). "Geometric Bounds for Eigenvalues of Markov Chains". The Annals of Applied Probability. 1 (1): 36–61. Institute of Mathematical Statistics. ISSN 1050-5164. JSTOR 2959624.